Learn About Dual HDMI Monitors And GPU/CPU Performance

There are lots of great reasons to run dual monitors at your workstation.

- Multi-tasking becomes much easier when you can keep two separate applications open, in full display size, at the same time.

- Editing video (or even images) is a pleasure when you don’t have to have all of the editing tools eating up space on the screen with the video frame or photo.

- Working with multiple documents is a breeze if you can cut and paste from one screen right to the other instead of switching back and forth.

- Any type of comparison work is simpler when you don’t have to toggle between windows or try to resize them so you can see them on the same screen.

- Working from video tutorials is no longer difficult if you can follow the video’s instructions on your second screen.

- And if you’re taking a break from work, gaming is more exciting than ever when you have a double-sized display in front of you.

And we haven’t even mentioned that many studies have shown that dual monitors improve workers’ productivity by as much as 50%, or that it’s a great luxury to be able to watch a movie on one screen while you’re pretending to do work on the other.

The idea of using two monitors should make sense by now. The question that often comes up, though, is “Won’t running two monitors slow down my machine?”

It shouldn’t, at least in most cases. Let’s see why – and what you can do to make sure there isn’t an issue.

Monitors and Resource Usage

When people worry about using up their machine’s resources they’re usually thinking about the CPU, the central processing unit that runs a computer. No matter how fast your CPU may be, it can only handle a certain number of simultaneous “processes” (in simple terms, demands for the processing unit’s resources) before it locks up or crashes. In most cases, things don’t get that far; as CPU usage approaches 100% the machine will slow down noticeably, and most experienced users will know to close some of the programs they’re running (or get a better CPU).

But CPU usage isn’t an issue when it comes to monitors, whether you have one, two or more. The slight demands of a monitor are no big deal to the processor. Actually, for most computers the monitor doesn’t even “talk” to the CPU; any necessary commands are sent through the machine’s graphics card or GPU (graphics processing unit). If there is a simple problem with your machine’s specs it’s likely to be not enough RAM, because an integrated graphics processor uses some of the system RAM for display memory. 8 or 16 gigs of RAM is what you’ll probably need for dual monitors.

The GPU is where most bottlenecks develop. One quick clarification: the GPU, the special processor responsible for displaying graphical elements like images and video, is usually one of the components on a computer’s graphics card. Some machines have separate GPUs that aren’t mounted on a graphics card (or are integrated with the CPU), but the terms “GPU” and “graphics card” are normally used interchangeably in discussions like these.

Everyday graphics displays don’t overly tax the GPU, assuming you have a modern graphics card that can handle the demands you place on it. In fact, most newer cards are designed to support multiple monitors and can output two signals at once. Where the GPU really has to work hard, though, is in decoding and rendering three-dimensional video and animations, which is why 3D designers and gamers require high-end graphics cards in their machines.

That brings us to the important point: you could run into performance issues with your GPU if you’re trying to do 3D work or play the latest video games on dual monitors, since the processing required is very resource-intensive. Those problems, whether they’re caused by the GPU’s inability to properly handle display resolutions, frame rates or something else, will end up slowing down performance greatly.
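To get a rough feel for why a second screen matters for 3D work, it can help to count how many pixels the GPU has to produce each second. The sketch below is only a back-of-the-envelope estimate: the 1920x1080 resolution, the 60 frames-per-second target and the helper name `pixels_per_second` are all assumptions chosen for illustration, and real GPU load depends on far more than raw pixel count.

```python
def pixels_per_second(width, height, fps, monitors=1):
    """Rough count of pixels the GPU must draw each second.

    Assumes every monitor shows a full-resolution 3D scene at the
    same frame rate (an illustrative simplification).
    """
    return width * height * fps * monitors


# Assumed example values: 1920x1080 screens at 60 frames per second.
one = pixels_per_second(1920, 1080, 60)
two = pixels_per_second(1920, 1080, 60, monitors=2)

print(f"one monitor:  {one / 1e6:.0f} million pixels/s")
print(f"two monitors: {two / 1e6:.0f} million pixels/s")
```

Under these assumptions the pixel count simply doubles with the second monitor, which is why a card that copes comfortably with a game on one screen may struggle when the same game spans two.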

What can designers who work in 3D or serious gamers do to solve the problem? They need higher-end equipment. One approach is to buy a high-end (and more expensive) gaming graphics card (like a GeForce GTX 970 or Radeon R9 280) built to support multiple monitors displaying 3D video. The other is to purchase a second graphics card and run each monitor off its own GPU. That’s not quite as easy as it sounds, since for optimum performance you need matching cards and the proper matching hardware (labeled CrossFire for AMD cards and SLI for NVIDIA cards), so you could be looking at an even bigger price tag to upgrade.

Dual Monitors for Normal Users

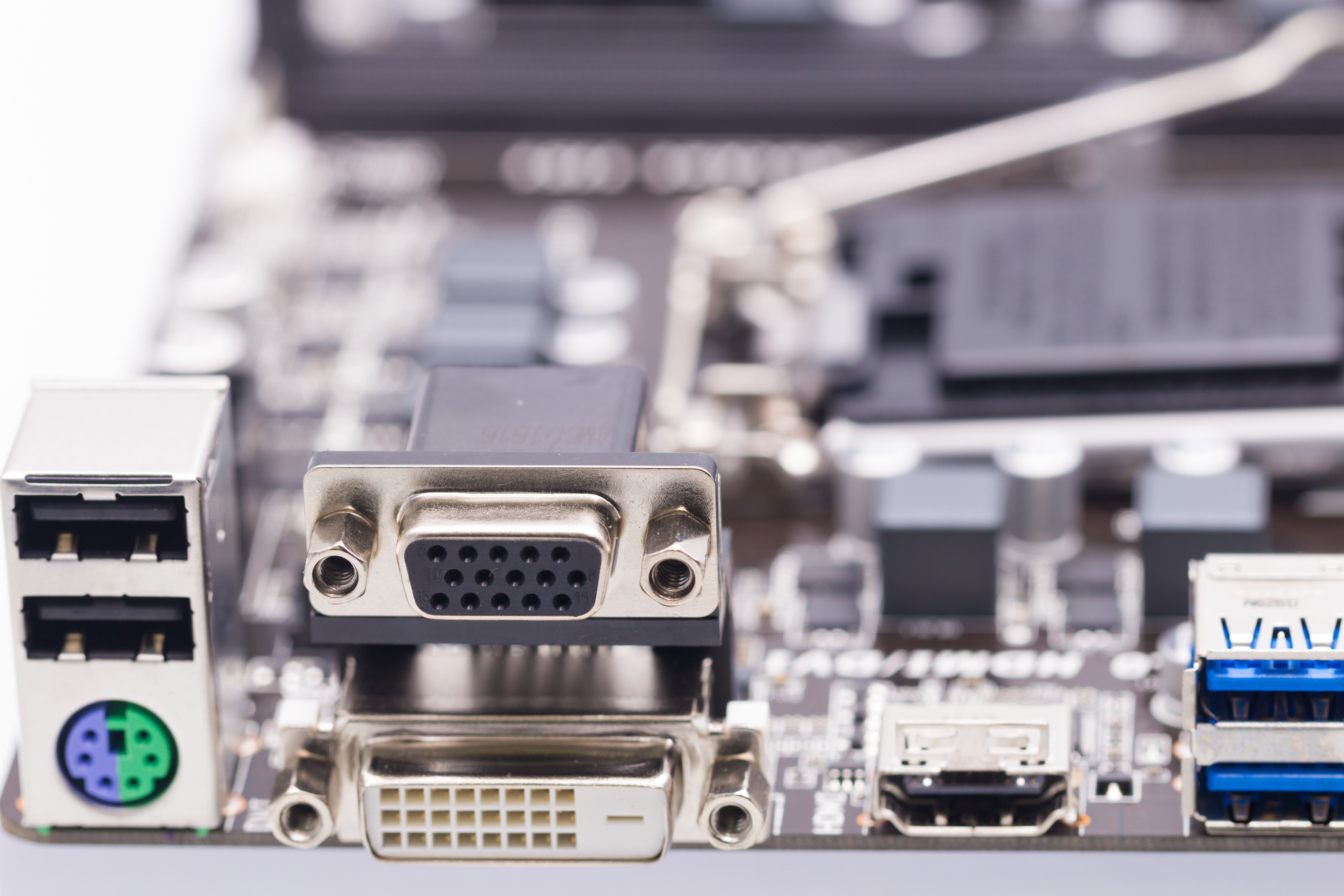

The majority of today’s video cards have two or three ports for DVI, HDMI and/or VGA outputs (one port may be for DisplayPort as well). That means all you have to do to run dual monitors is feed one with an HDMI cable and the other with a DVI cable; they’re the best match. HDMI, DVI and DisplayPort are all digital formats so their video signals will look pretty much the same, but VGA is an older analog format and the video won’t come close to the quality of the digital signals, so avoid using the VGA port if possible. If both of your monitors require HDMI feeds, just use a DVI-to-HDMI adapter cable for the second.

The good news is that you don’t have to worry too much about required specs for dual monitor operation. Any modern integrated video card with multiple outputs will do just fine to run two 1920x1200 displays, since all that’s required is 24MB of memory for each display and today’s cards all have well over 48MB available. Some cards, like the ones made by NVIDIA, support higher resolutions with multiple DVI outputs. You won’t have to worry about your computer specs, as long as you have enough RAM (as suggested above, 8 gigs or more) and a modern CPU.
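If you want to sanity-check the memory figures for your own screens, the arithmetic is simple enough to sketch. The example below estimates the size of an uncompressed display buffer for a 1920x1200 screen; the 32 bits per pixel, the number of buffers and the helper name `framebuffer_bytes` are assumptions for illustration, and actual drivers may allocate memory differently.

```python
def framebuffer_bytes(width, height, bits_per_pixel=32, buffers=1):
    """Estimate memory for uncompressed display buffers.

    Assumes 32-bit color by default; 'buffers' models double or
    triple buffering (illustrative, not how every driver works).
    """
    return width * height * (bits_per_pixel // 8) * buffers


MIB = 1024 * 1024

single = framebuffer_bytes(1920, 1200)
double = framebuffer_bytes(1920, 1200, buffers=2)

print(f"one buffer:  {single / MIB:.1f} MiB")   # 8.8 MiB
print(f"two buffers: {double / MIB:.1f} MiB")   # 17.6 MiB
print(f"two displays, double-buffered: {2 * double / MIB:.1f} MiB")  # 35.2 MiB
```

Under these assumptions even two double-buffered displays need only a few dozen megabytes, a tiny fraction of what modern cards provide, which fits the point that ordinary desktop use on two monitors is not a memory problem.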

One final note: what happens if everything’s hooked up, but the two video displays still look mismatched? Chances are that the monitors are set for different color formats, so just set both to the same color depth in your display settings, and you should be all set.